MacGillivray Law is Investigating Legal Action for Teens Against Meta and Google

Managing Partner Angeli Swinamer has directed the firm to investigate potential legal action on behalf of young Atlantic Canadians harmed by social media platforms — and that investigation begins by hearing from the families most affected.

Powerful technology companies can be held to account for the design choices they make.

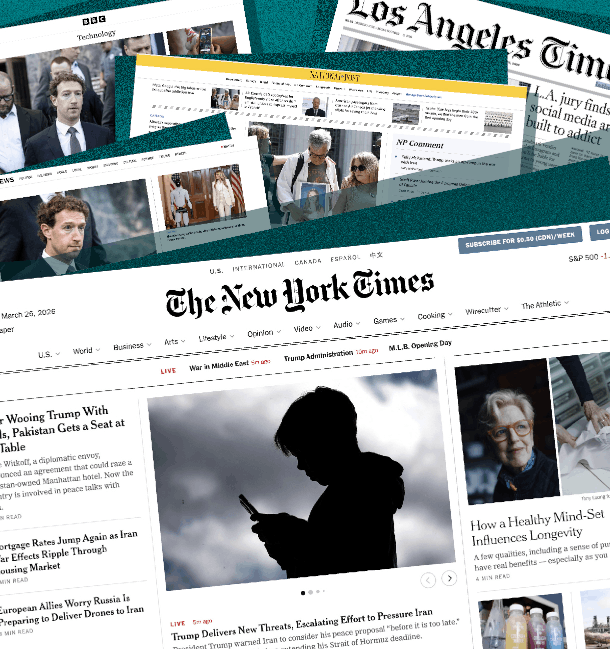

These platforms are not neutral tools. They are engineered to capture attention, deepen dependence, and draw young users back — again and again — even as the companies behind them have known for years that this design causes real harm. The product was built that way on purpose.

Litigation does more than pursue compensation after the fact. It forces evidence into the open. It tests whether safety was treated as a genuine priority or deployed as a talking point. Civil litigation, at its most effective, drags internal decisions into daylight — and that transparency matters.

Whistleblowers have been critical in establishing what these companies knew. Frances Haugen, a former Meta employee, told the United States Senate that Meta’s products harm children and that company leadership was aware of how to make its platforms safer — and chose not to. Her testimony was not speculation. It was corroborated by Meta’s own internal research.

That research, disclosed in 2021, contained a striking admission: “We make body image issues worse for one in three teen girls.” Internal findings also linked excessive use to increased anxiety and depression in young people. The company had this information. It continued to optimize for engagement regardless.

Public health research points in the same direction. A Public Health Agency of Canada study found that adolescents with problematic social media use had 2.64 times the risk of high psychological distress and 3.45 times the risk of emotional problems, alongside materially lower life satisfaction, compared with low-risk peers. The study identified a clear gradient: as problematic use increased, so did the risk of poor mental health outcomes.

None of this is an argument against technology, or a suggestion that young people are without agency. People are free to post, connect, learn, and create — and technology, used well, enriches lives.

The real question is a narrower and more specific one: should companies be permitted to engineer products that make it genuinely difficult for children and teenagers to disengage, to the sometimes serious detriment of their mental health? That is not primarily a question about free expression. It is a question about product design — and about accountability.

At their best, lawsuits produce reforms that should never have required litigation: clearer warnings, safer default settings, genuine age safeguards, and platforms built with children’s welfare as a design consideration rather than an afterthought. They can also provide meaningful recourse for those who have already been harmed.

MacGillivray Law is currently surveying Atlantic Canadians to better understand the scope of this issue. If your child has experienced serious psychological harm connected to addiction to these platforms, we invite you to share your experience with us.

All submissions are held in strict confidence. We are not requesting your child’s name at this stage — only some general details about what occurred. Submitting this form does not establish a solicitor-client relationship, but every submission will be reviewed and responded to.

Have questions for our team?

Request a Free Consultation

If you would like to learn your legal options at no obligation, contact us today to set up a free consultation.